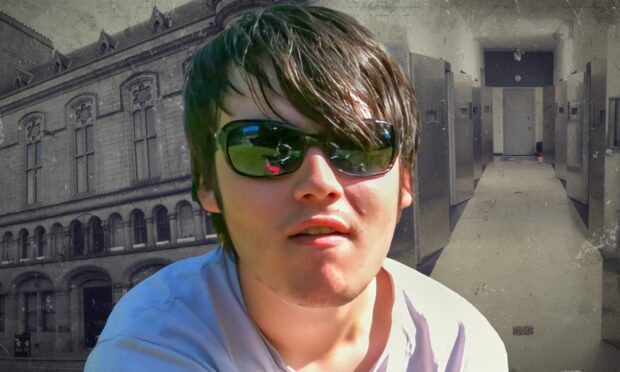

Police criticised as sheriff rules Aberdeen man’s death in custody ‘likely’ avoidable

Naked man seen running down A96 in Elgin hospitalised after being hit by HGV

The busy road was closed for several hours due to the collision.

Inverness man accused of ‘breach of the peace’ standoff with armed police

Allan Craig appeared in private at Inverness Sheriff Court following an alleged incident at Kenneth Street on Thursday afternoon.

‘Failing Fort William’: Five of town’s buildings reach crisis point

Parent raises fears over secondary school as water runs down walls and cladding falls from roof.

Academy Street: Court of Session legal challenge could fall at first hurdle

The owners of the Eastgate Centre have raised an action in the Court of Session.

New double yellow lines painted on Elgin roads provoke laughter and confusion

The new road markings do not appear to match the ones that were there previously.

It’s back to work for Fraserburgh care home residents as new shop opens

Staff in their 90s are serving up old-fashioned sweeties and selling copies of The P&J.

P&J Run Fest

Were you part of P&J Run Fest? Find all the runners and times here